- 2026.04: 💥💥💥 We release Kimi-K2.6❗️❗️❗️ Frontier-Level Vision TIR❗️❗️❗️ Visualize: K2.6 VTIR Trajectories

- 2026.01: 💥💥💥 We release Kimi-K2.5❗️❗️❗️

- 2025.11: 💥 We release IE-Critic-R1, an MLLM specialized in assessing the quality of text-driven image editing results. It is a pointwise, generative reward model that leverages CoT reasoning SFT and RLVR to provide accurate, human-aligned evaluations of image editing.

- 2025.07: 💥💥💥 We release Kimi-K2❗️❗️❗️

- 2025.02: 🎉🎉🎉 ChartMoE is selected as an ICLR2025 Oral (1.8%)!

- 2025.01: 🎉🎉 ChartMoE is accepted by ICLR2025!

- 2024.10: 🎉🎉 We release Aria, a native LMM that excels on text, code, image, video, PDF and more!

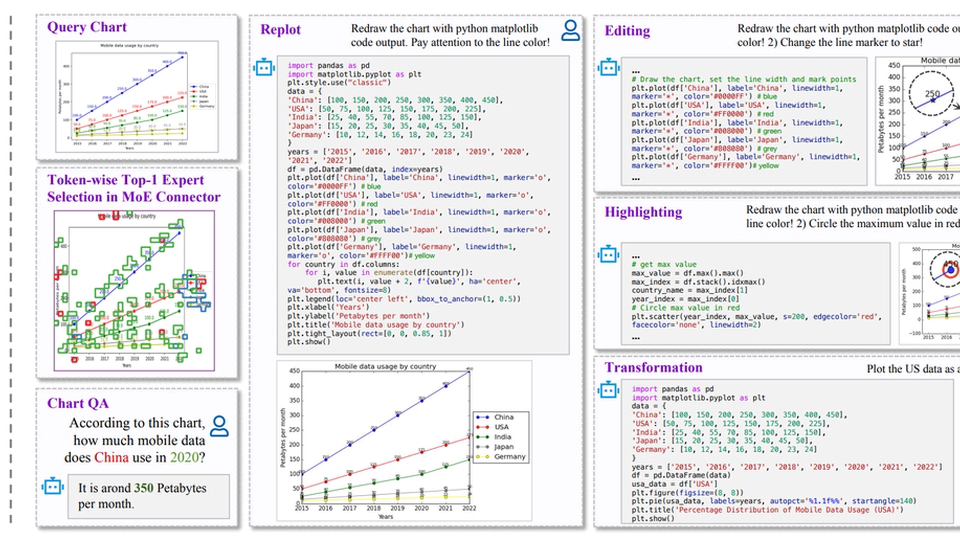

- 2024.09: 💥💥 We release ChartMoE, an MLLM with an MoE connector, for advanced chart 1️⃣understanding, 2️⃣replot, 3️⃣editing, 4️⃣highlighting and 5️⃣transformation.

- 2023.12: 💥 MPP-Qwen-Next is released! Prevent poverty (24GB of VRAM) from limiting imagination. All 7B/14B LLaVA-like training is conducted on RTX 3090 GPUs using pipeline parallelism.

About Me

👋Hi, I’m Bowen (Brian) Qu, working on post-training (PostTrain) at Moonshot.ai (Kimi, 月之暗面). My research interests include MLLM, TIR (Tool-Integrated Reasoning) and Agentic RL. The logo of this website is my lovely cat, Baka (巴卡 in Chinese)!

- Education: I received my MPhil degree from the School of Electronic and Computer Engineering (SECE), Peking University (PKU), in June 2025. Previously, I received an Honours B.E. degree from the School of Electronic Information and Communications (EIC), Huazhong University of Science and Technology (HUST), in June 2022.

- Experience: I have been fortunate to take part in several interesting MLLM research projects:

- [2025.03 - Present] Moonshot.ai, Technical Staff (PostTrain). Cooking K2.5 series:

- 🔹 Vision Agentic RL (K2.6 Blog Part - Visual Agent, Frontier-Level Vision TIR)

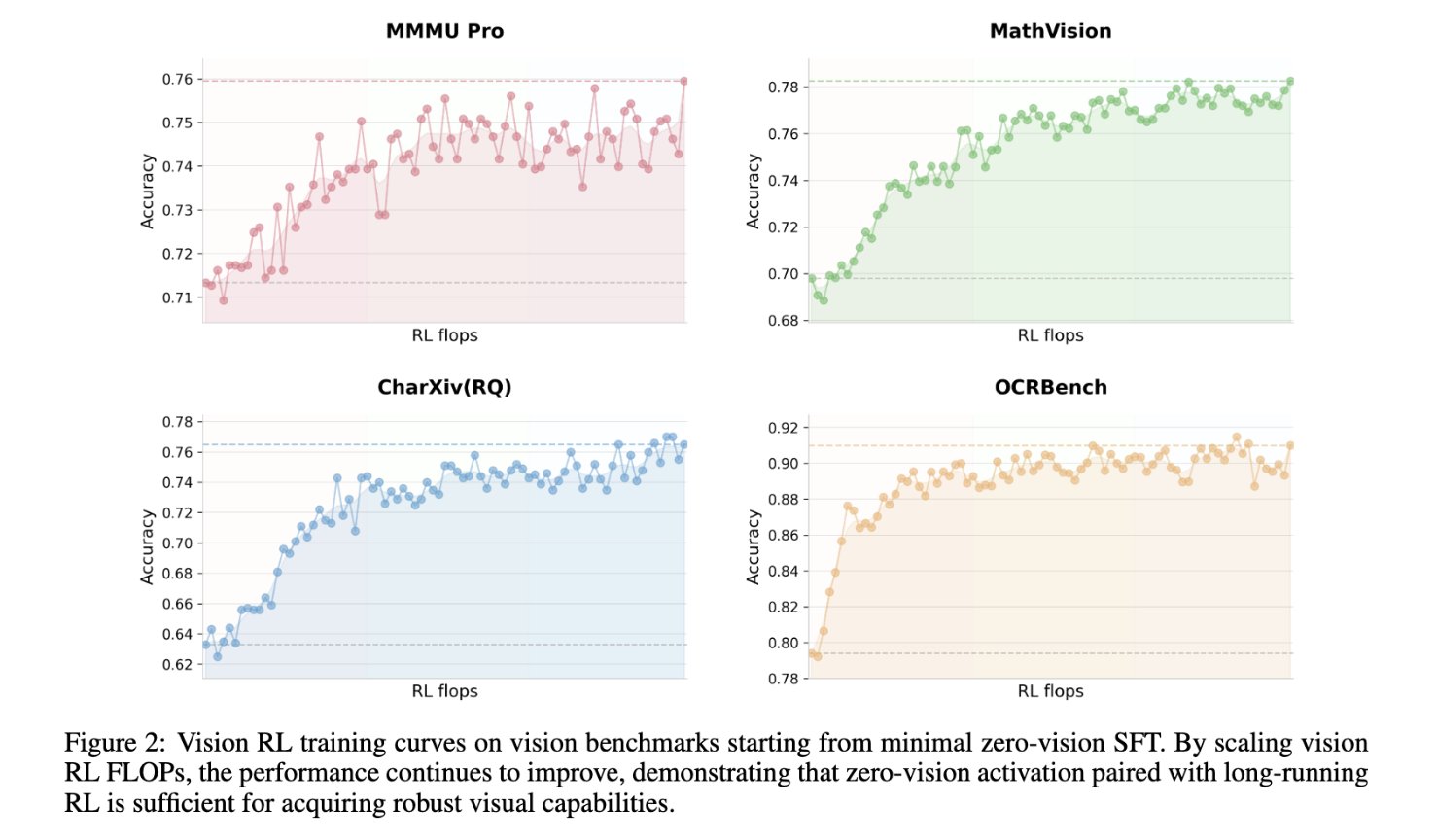

- 🔹 Native Multimodal RL (K2.5 Report Chap2.2, ZeroVision ColdStart -> Vision-Centric RL)

- 🔹 Chart Understanding and Chart-to-Code

- [2024.04 - 2024.12] 01.ai & Rhymes.ai, Research Intern, Multimodal Team, supervised by Junnan Li, working closely with Dongxu Li and Haoning Wu.

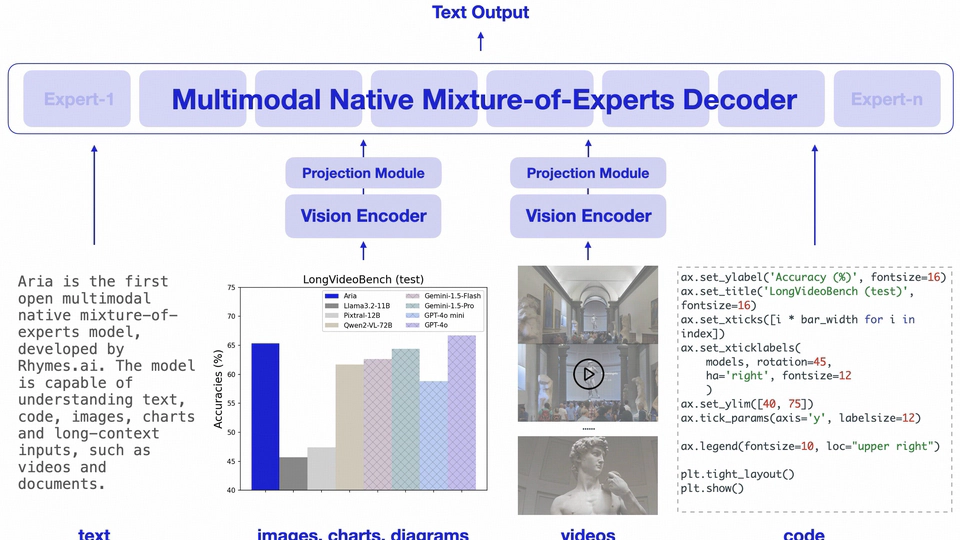

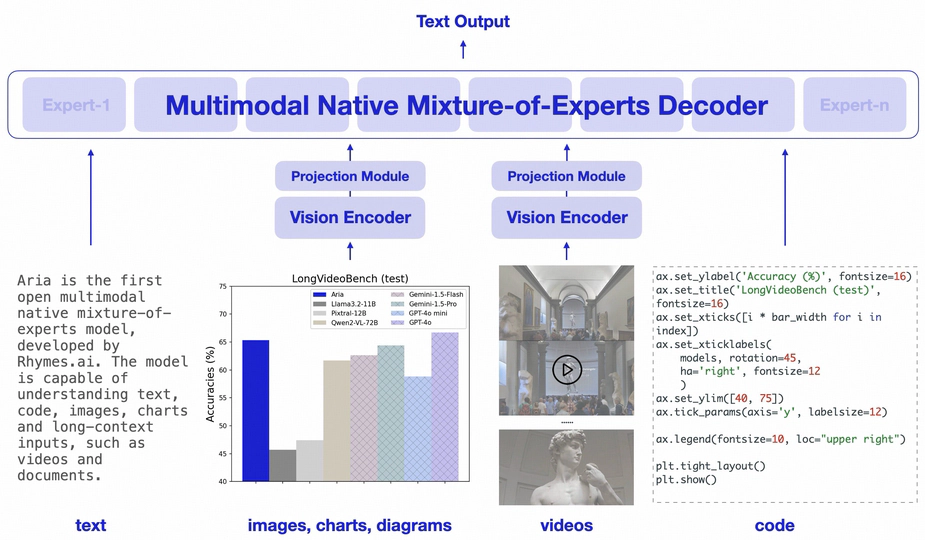

- 🏆 Core Contributor of Aria — an Open Multimodal Native MoE

- [2024.02 - 2024.07] IDEA Research, Research Intern, working closely with Zhengzhuo Xu, Yiyan Qi and Chengjin Xu.

- 🏆 Co-first Author of ChartMoE (ICLR2025 Oral): Mixture of Diversely Aligned Expert Connector for Chart Understanding

- Status: I'm always eager to learn new insights and ideas. The potential of Agents is still being explored. If you're in Beijing, let's grab a coffee and discuss it. Also, feel free to drop me an 📧 if there is a good fit!

Interests

- Vision-Language Model(VLM)

- MLLM Reasoning

- AI-Generated Image/Video Quality Assessment

Education

- Master of Science, Peking University (PKU)

- Bachelor of Engineering, Huazhong University of Science and Technology (HUST)

🔥 News

Selected Outputs

🌟 is me. * Equal Contribution (i.e., Co-First Author). 📧 Corresponding Author.

Core-Authored Publications

(2025).

IE-Critic-R1: Advancing the Explanatory Measurement of Text-Driven Image Editing for Human Perception Alignment.

arXiv preprint.

(2025).

ChartMoE: Mixture of Diversely Aligned Expert Connector for Chart Understanding.

ICLR2025 Oral (1.8%).

(2024).

Aria: An Open Multimodal Native Mixture-of-Experts Model.

Technical Report.

(2024).

Exploring AIGC Video Quality: A Focus on Visual Harmony, Video-Text Consistency and Domain Distribution Gap.

CVPRW2024.

(2024).

Bringing Textual Prompt to AI-Generated Image Quality Assessment.

ICME2024.

Selected Projects

😺 I enjoy open-sourcing. Here is a selection of projects that I’ve led or contributed to as a core contributor.