About Me

👋 Hi, I’m Bowen (Brian) Qu. My research interests include Vision-Language Models and MLLM Reasoning. The logo of this website is my lovely cat, Baka (巴卡 in Chinese)!

- Education: I have been an MPhil candidate at the School of Electronic and Computer Science (SECE), Peking University (PKU), since fall 2022. Previously, I received an Honours B.E. degree from the School of Electronic Information and Communications (EIC), Huazhong University of Science and Technology (HUST), in June 2022.

- Experience: I have had the honor of participating in several interesting MLLM research projects:

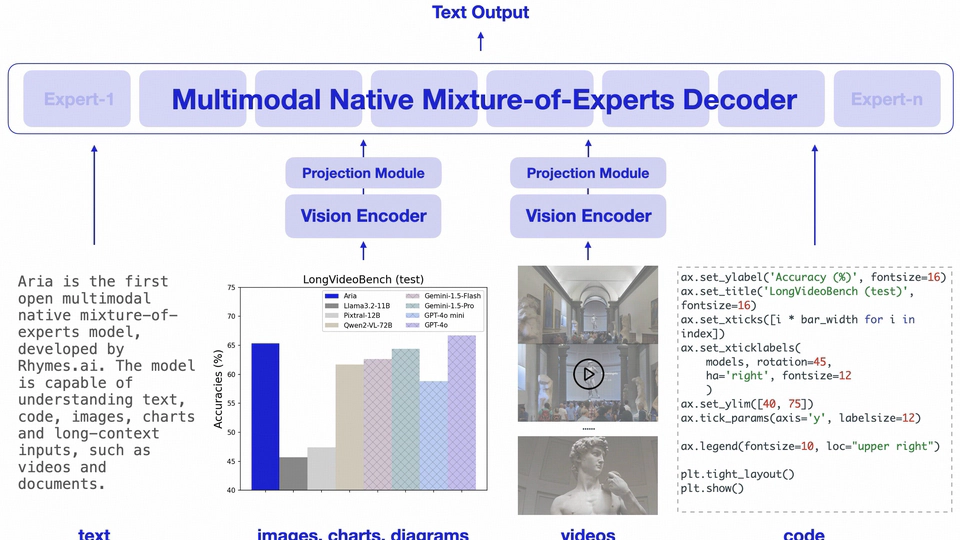

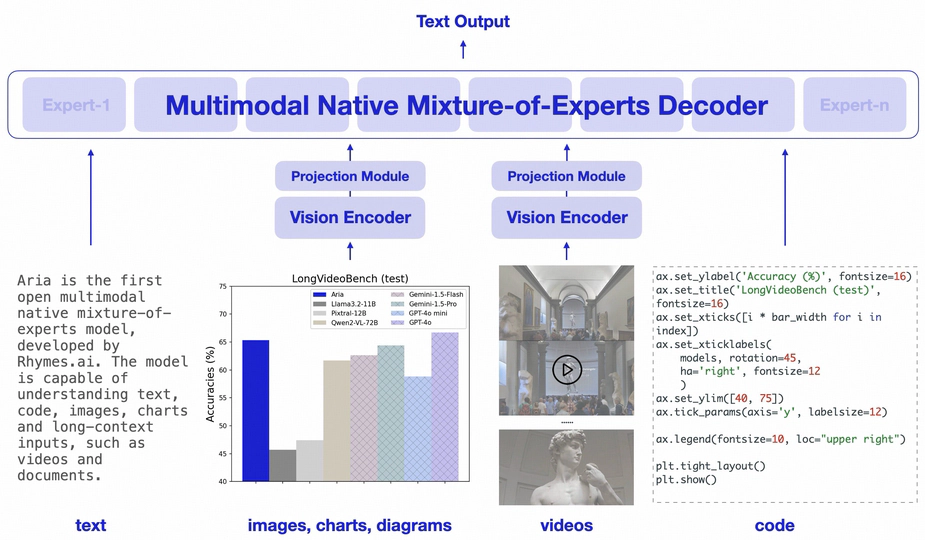

- [2024.05 - 2024.12] 01.ai & Rhymes.ai, Multimodal Team, supervised by Junnan Li, working closely with Dongxu Li and Haoning Wu. Output: Aria (multimodal native MoE)

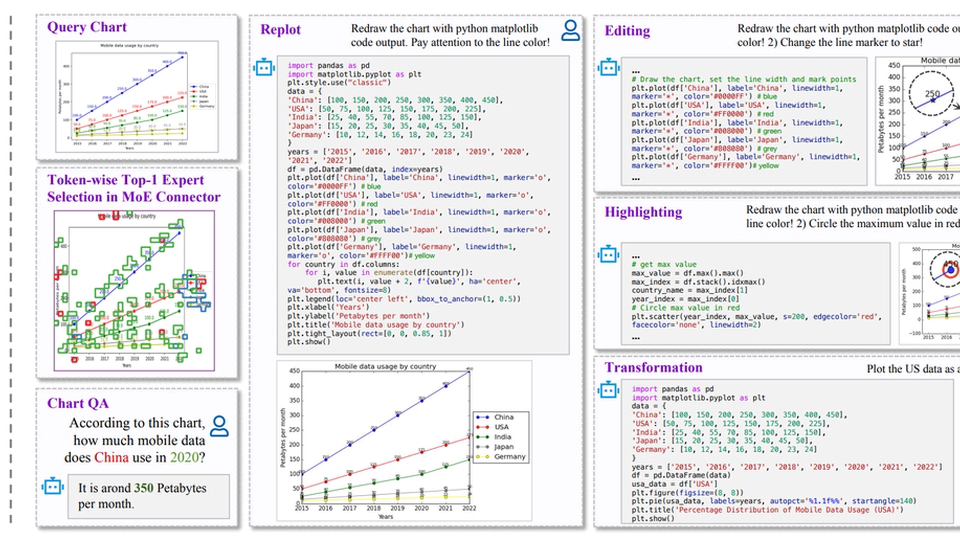

- [2024.02 - 2024.07] IDEA Research, working closely with Zhengzhuo Xu, Yiyan Qi and Chengjin Xu. Output: ChartMoE (ICLR2025 Oral)

- Status: I expect to receive my master’s degree in June 2025. I’m open to academic collaboration opportunities. Feel free to drop me an 📧 if there is a good fit!

Interests

- Vision-Language Models (VLMs)

- MLLM Reasoning

- AI-Generated Image/Video Quality Assessment

Education

- Master of Science, Peking University (PKU)

- Bachelor of Engineering, Huazhong University of Science and Technology (HUST)

🔥 News

- 2025.02: 🎉🎉🎉 ChartMoE is selected as an ICLR2025 Oral (1.8%)!

- 2025.01: 🎉🎉 ChartMoE is accepted to ICLR2025!

- 2024.10: 🎉🎉 We release Aria, a native LMM that excels on text, code, image, video, PDF and more!

- 2024.09: 💥💥 We release ChartMoE, an MLLM with an MoE connector, for advanced chart 1️⃣ understanding, 2️⃣ replotting, 3️⃣ editing, 4️⃣ highlighting and 5️⃣ transformation.

- 2023.12: 💥 MPP-Qwen-Next is released! Don't let poverty (24GB of VRAM) limit your imagination. All 7B/14B LLaVA-like training is conducted on RTX 3090 GPUs using pipeline parallelism.

Selected Outputs

🌟 marks me. * Equal contribution (i.e., co-first author). 📧 Corresponding author.

Core-Authored Publications

- ChartMoE: Mixture of Diversely Aligned Expert Connector for Chart Understanding. ICLR2025 Oral (1.8%), 2025.

- Aria: An Open Multimodal Native Mixture-of-Experts Model. Technical Report, 2024.

- Exploring AIGC Video Quality: A Focus on Visual Harmony, Video-Text Consistency and Domain Distribution Gap. CVPRW2024, 2024.

- Bringing Textual Prompt to AI-Generated Image Quality Assessment. ICME2024, 2024.

Selected Projects

😺 I enjoy open-sourcing. Here is a selection of projects that I have led or worked on as a core contributor.