ChartMoE: Mixture of Diversely Aligned Expert Connector for Chart Understanding

Jan 22, 2025

Zhengzuo Xu*

🌟Bowen Qu*

Yiyan Qi*

Sinan Du

Chengjin Xu

Chun Yuan📧

Jian Guo📧

Abstract

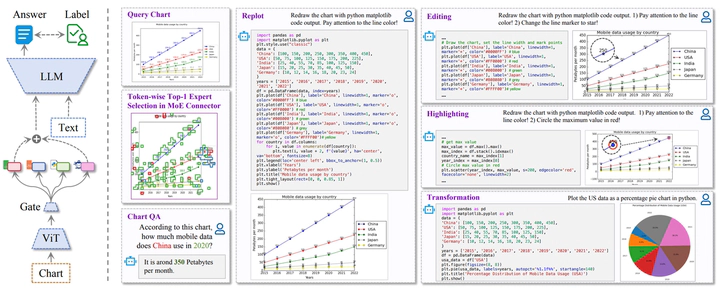

Automatic chart understanding is crucial for content comprehension and document parsing. Multimodal Large Language Models (MLLMs) have demonstrated remarkable capabilities in chart understanding through domain-specific alignment and fine-tuning. However, current MLLMs still struggle to provide faithful data and reliable analysis based solely on charts. To address this, we propose ChartMoE, which employs a Mixture of Experts (MoE) architecture in place of the traditional linear projector to bridge the modality gap. Specifically, we train several linear connectors through distinct alignment tasks and use them as the initialization parameters for different experts. Additionally, we introduce ChartMoE-Align, a dataset of nearly 1 million chart-table-JSON-code quadruples for three alignment tasks (chart-table, chart-JSON, and chart-code). Together with the vanilla connector, these connectors initialize the experts diversely, and we then apply high-quality knowledge learning to further refine the MoE connector and LLM parameters. Extensive experiments demonstrate the effectiveness of the MoE connector and our initialization strategy; for example, ChartMoE raises accuracy on the ChartQA benchmark from 80.48% (the previous state of the art) to 84.64%.
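To make the connector idea concrete, here is a minimal, dependency-free Python sketch. It is a hypothetical illustration, not the authors' implementation, and it rests on several assumptions: each expert is a plain linear projector, a linear router with softmax gating picks the top-k experts per visual token (k = 2 is an assumed value), and the four experts are initialized by copying weights from a vanilla connector and three connectors aligned on chart-table, chart-JSON, and chart-code tasks. The names `MoEConnector`, `matvec`, `softmax`, `gates`, `top_k`, and all toy weight values are made up for illustration.

```python
import math
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]  # shape: [out_dim][in_dim]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Return the matrix-vector product W @ x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def softmax(logits: Vector) -> Vector:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


class MoEConnector:
    """Hypothetical top-k MoE connector mapping a visual token into the LLM embedding space.

    Each expert is a linear projector whose weights are copied from a separately
    pre-aligned connector, so the experts start out diverse rather than identical.
    """

    def __init__(self, expert_weights: List[Matrix], router: Matrix, top_k: int = 2):
        if not 1 <= top_k <= len(expert_weights):
            raise ValueError("top_k must be between 1 and the number of experts")
        if len(router) != len(expert_weights):
            raise ValueError("router needs one row (one logit) per expert")
        # Copy the weights so later updates never mutate the source connectors.
        self.experts = [[row[:] for row in w] for w in expert_weights]
        self.router = [row[:] for row in router]
        self.top_k = top_k

    def gates(self, x: Vector) -> Dict[int, float]:
        """Map each selected expert index to its renormalized gate weight."""
        probs = softmax(matvec(self.router, x))
        top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[: self.top_k]
        norm = sum(probs[i] for i in top)
        return {i: probs[i] / norm for i in top}

    def forward(self, x: Vector) -> Vector:
        """Gate-weighted sum of the selected experts' projections of x."""
        out = [0.0] * len(self.experts[0])
        for i, g in self.gates(x).items():
            y = matvec(self.experts[i], x)
            out = [o + g * yi for o, yi in zip(out, y)]
        return out


# Toy 2-d weights standing in for connectors trained on different alignment tasks.
vanilla = [[1.0, 0.0], [0.0, 1.0]]
table = [[2.0, 0.0], [0.0, 2.0]]
json_conn = [[0.0, 1.0], [1.0, 0.0]]
code = [[-1.0, 0.0], [0.0, -1.0]]
router = [[1.0, 0.0], [1.0, 0.0], [0.0, 0.0], [-1.0, 0.0]]

connector = MoEConnector([vanilla, table, json_conn, code], router, top_k=2)
print(connector.forward([1.0, 0.0]))  # → [1.5, 0.0]
```

In this toy run the router ties the vanilla and table experts for the input token, so each receives a gate of 0.5 and the output is their average projection. The point of the diverse initialization, as the abstract frames it, is that experts begin from differently aligned projectors rather than identical copies; in the sketch that diversity is simply the difference between the toy matrices.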

Publication

2025 International Conference on Learning Representations (Oral Presentation, 1.8%)
